In the architecture of digital platforms, rules often appear clear at the surface—minimum ages, safety policies, community standards. Yet beneath those declarations lies a more complex question: how effectively are those rules enforced? That question now sits at the center of growing scrutiny facing Meta across Europe.

A Regulatory Challenge Emerges

European regulators have accused Meta of failing to adequately prevent children under 13 from accessing its platforms, including Facebook and Instagram. The findings stem from a lengthy investigation under the Digital Services Act (DSA), a sweeping law designed to hold major tech companies accountable for user safety.

Preliminary conclusions suggest that Meta’s systems for age verification and enforcement are insufficient. Children have reportedly been able to create accounts using false birthdates, while tools to report underage users have been described as ineffective or difficult to use.

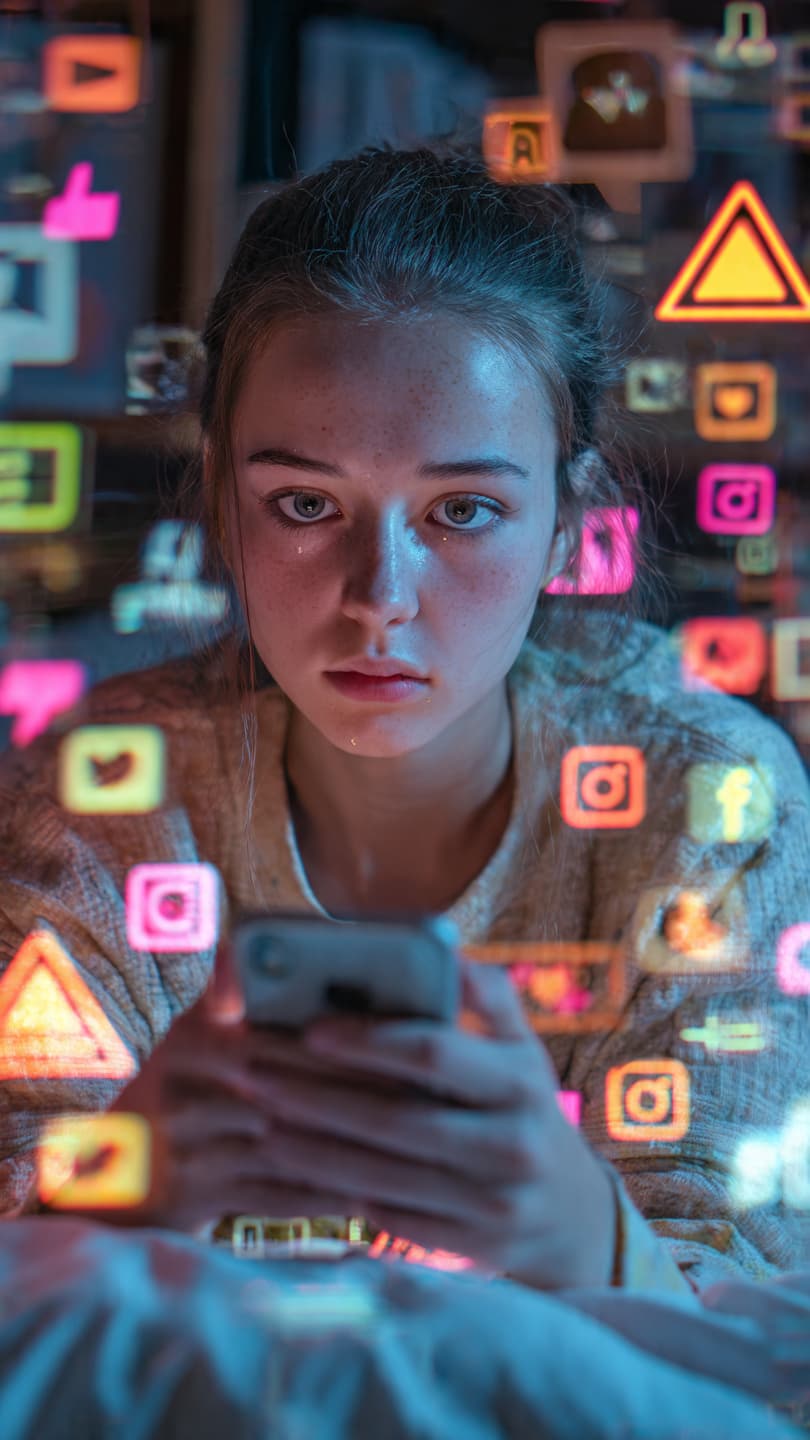

The Scale of the Concern

Regulators estimate that a notable share of children under 13 are still present on these platforms, despite rules explicitly prohibiting it. This gap between policy and practice has raised concerns about exposure to risks such as inappropriate content, cyberbullying, or online exploitation.

The European Commission has emphasized that simply stating rules is not enough; companies must demonstrate that those rules are actively enforced in real-world conditions.

Meta’s Response

Meta has pushed back against the accusations, arguing that age verification is a complex, industry-wide challenge. The company says it already deploys systems to detect and remove underage users and continues to invest in new technologies.

At the same time, Meta has indicated willingness to cooperate with regulators and refine its approach—an acknowledgment that the issue extends beyond a single platform to the broader design of online identity systems.

What Could Happen Next

The findings are not yet final, but the potential consequences are significant. Under the DSA, violations could lead to fines of up to 6% of global annual revenue, a figure that could reach billions of dollars.

Beyond financial penalties, the case may shape future rules across Europe. Policymakers are already discussing stricter age limits and stronger verification systems, reflecting a wider shift toward protecting younger users in digital spaces.

The issue is not simply whether children are present on social media; it is how responsibility for that presence is defined and enforced. As regulators press for clearer answers, the outcome may influence not only one company, but the broader expectations placed on platforms that host the next generation of users.

AI Image Disclaimer: Illustrations are AI-generated and intended for conceptual representation only.


Note: This article was published on BanxChange.com.