There is a certain stillness in the idea of a genome, as though it exists fully formed, waiting only to be read. Yet in practice, it arrives in fragments—scattered sequences, partial signals, pieces that must be gathered and arranged before meaning can begin to emerge. The process is less like reading a book and more like assembling a text whose pages have been dispersed by time and motion.

In laboratories and data centers alike, this work unfolds quietly. Sequencing machines produce vast streams of information, each dataset carrying traces of biological identity. But between raw data and usable insight lies a space of interpretation, where analysis must be careful, consistent, and precise. It is within this space that systems like Metapipeline-DNA begin to take shape.

Designed to automate and standardize genome sequencing analysis, Metapipeline-DNA addresses a challenge that has grown alongside the field itself. As sequencing technologies have advanced, the volume of data has expanded rapidly, bringing with it variability in how that data is processed. Different tools, workflows, and parameters can lead to differences in results, even when applied to the same underlying sequence.

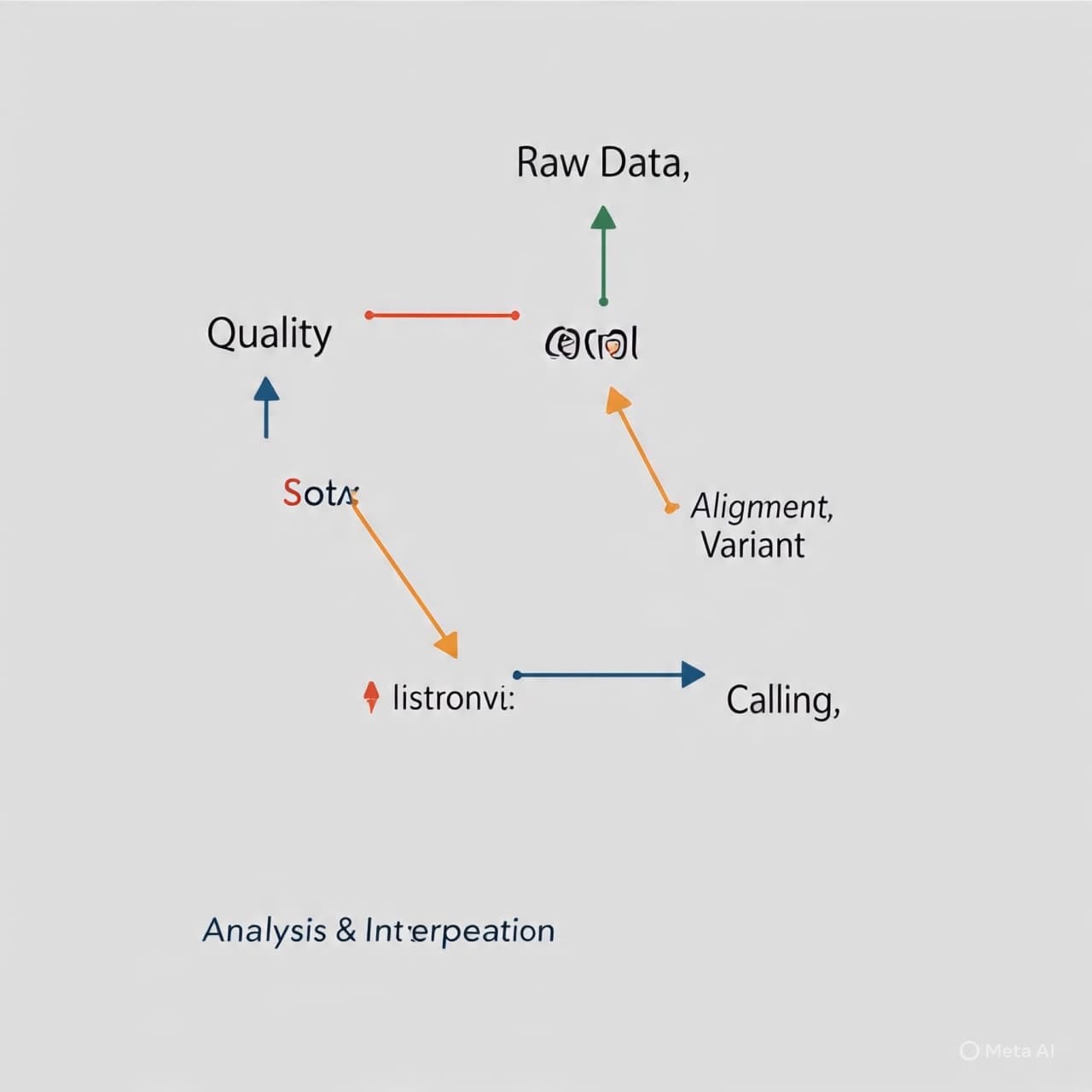

The introduction of a unified pipeline offers a different approach—one that seeks to reduce variation not by limiting inquiry, but by establishing a consistent framework. By automating key stages of analysis, from data preprocessing to alignment and interpretation, the system allows researchers to move through complex workflows with greater efficiency and reproducibility.

There is a quiet discipline in such standardization. It does not alter the genome itself, nor the fundamental questions being asked, but it shapes the path through which answers are reached. In doing so, it helps ensure that results can be compared across studies, across laboratories, and across time, forming a more coherent body of knowledge.

The implications extend beyond efficiency. In fields such as clinical genomics, where sequencing results may inform medical decisions, consistency is more than a technical preference: it is a condition for results that can be trusted. A standardized pipeline can support clearer interpretation by reducing the uncertainty that methodological differences introduce.

At the same time, the system reflects a broader movement within science toward integration. As datasets grow in scale and complexity, the tools used to interpret them must evolve, not only in capability but in coordination. Automation, in this context, is less about replacing human insight and more about supporting it—allowing researchers to focus on questions rather than processes.

The flow of genomic data, once fragmented and variable, begins to take on a more continuous form. Each sequence, processed through a shared framework, contributes to a larger structure of understanding. It is a gradual alignment, where complexity is not reduced, but made navigable.

Metapipeline-DNA has been developed to automate and standardize genome sequencing analysis, aiming to improve reproducibility and efficiency across research and clinical applications. The system integrates multiple analytical steps into a unified workflow, supporting consistent processing of genomic data.

AI Image Disclaimer

Images are AI-generated and intended for conceptual illustration only.
