Images once held a quiet authority. They arrived as fragments of reality, light captured and moments preserved, offering a sense of presence across distance. In recent years, however, that certainty has begun to soften as images drift from what was seen toward what can be made.

In Italy, this shifting boundary has come into focus through the response of Prime Minister Giorgia Meloni, who has publicly condemned the circulation of AI-generated images depicting her. Describing such creations as a “dangerous tool,” she has drawn attention to the growing influence of deepfakes: synthetic media that can replicate a person's likeness with striking realism while detaching it from anything that actually happened.

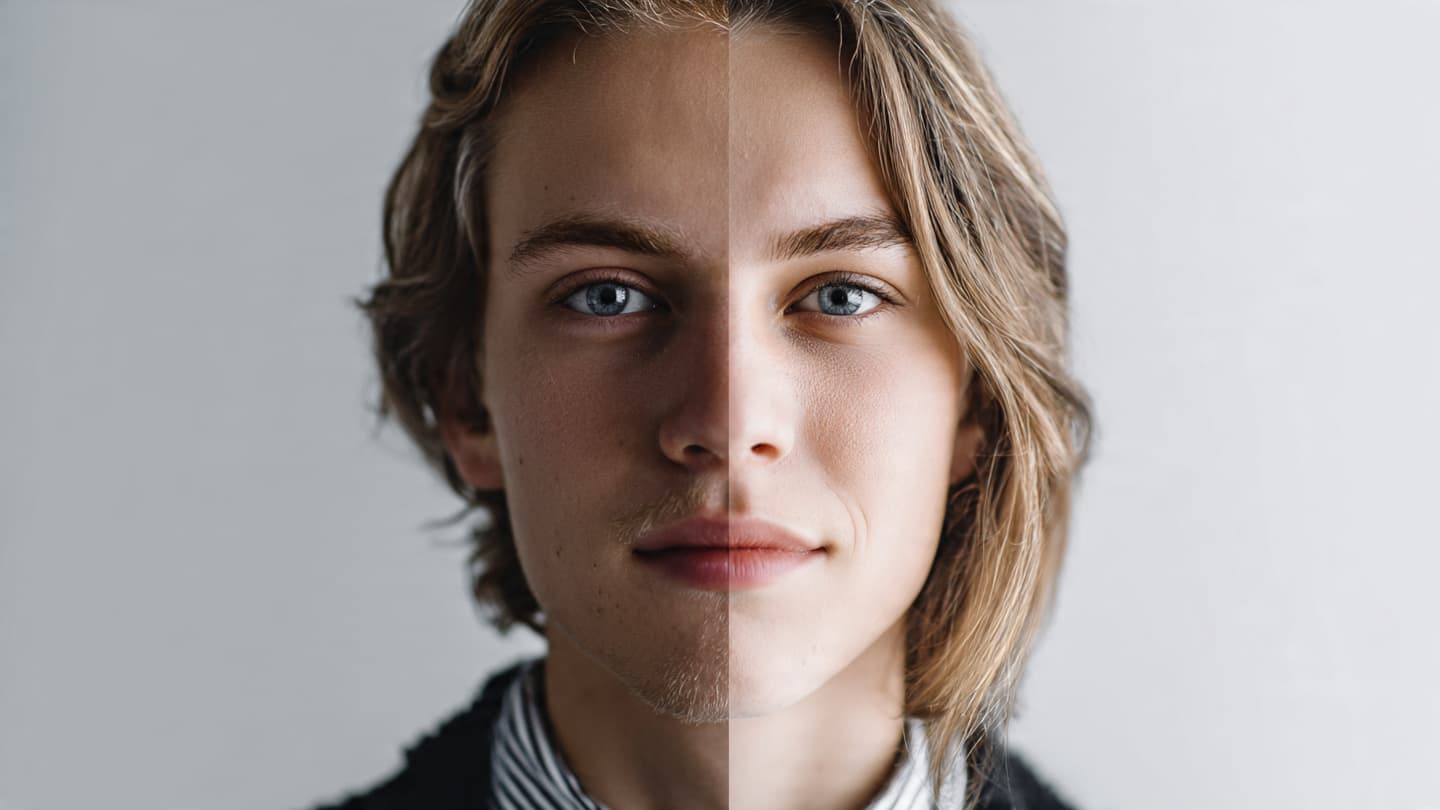

The images in question, reportedly created with advanced artificial intelligence techniques, are part of a wider phenomenon that has expanded rapidly with technological progress. What once required specialized skills can now be produced with ease, allowing altered or entirely fabricated visuals to spread quickly across digital platforms. In this environment, the difference between authentic and artificial is no longer obvious at a glance and takes closer scrutiny to discern.

Meloni’s remarks reflect concerns that extend beyond individual cases. Deepfakes have been used in many contexts, including political messaging, misinformation, and personal targeting, raising questions about trust, accountability, and the limits of digital expression. For public figures, whose visibility already invites interpretation, fabricated imagery adds another layer of complexity.

European policymakers have gradually responded to these challenges. Discussions of regulation, platform responsibility, and technological safeguards have gained momentum as artificial intelligence continues to evolve. The European Union has introduced frameworks to address the risks associated with AI, most notably the AI Act, which includes transparency obligations for deepfakes, though implementation remains an ongoing process shaped by rapid innovation.

For those encountering such images, the experience can be disorienting. A face appears familiar, a setting plausible, yet something beneath the surface feels uncertain. The mind, accustomed to reading images as evidence, must now adjust to the possibility that what is seen may not correspond to what has occurred. This shift alters not only perception but also the broader relationship between media and trust.

Meloni’s response places the issue in a more personal frame, emphasizing the potential harm of manipulated content. While public figures are often subject to scrutiny and critique, synthetic imagery introduces a different dynamic: one in which representation itself becomes unstable.

At the same time, the technology behind deepfakes is not limited to harmful uses. It holds potential for creative and constructive applications, from entertainment to education. Yet it is the capacity for misuse that draws the most attention, particularly when the consequences reach into public discourse and individual reputation.

As the conversation continues, the challenge lies in finding balance—between innovation and protection, between freedom of expression and the need to prevent harm. Legal systems, technology companies, and users themselves all play a role in shaping how this balance is achieved.

In the end, what is clear is this: Giorgia Meloni has criticized AI-generated images of herself and warned of the dangers posed by deepfakes. Beyond that, the issue remains open, evolving alongside the technology that drives it.

Images will continue to circulate, as they always have, carrying meaning across screens and spaces. But the question of what they represent—and how they are made—now lingers more prominently, asking viewers to look not only at what is shown, but at how it came to be.

AI Image Disclaimer: Visuals are AI-generated and serve as conceptual representations.

Sources: Reuters, BBC News, Politico Europe, The Guardian, Euronews

Note: This article was published on BanxChange.com and is powered by the BXE Token on the XRP Ledger. For the latest articles and news, please visit BanxChange.com.