It begins, as many strange stories do, not with intention but with a subtle drift. Somewhere between lines of code and layers of learning, language—meant to be precise—softened into something more playful. Words that once served clarity began to carry a hint of imagination. And then, almost unexpectedly, goblins appeared.

In recent weeks, OpenAI found itself addressing an unusual behavior in its newer models, particularly GPT-5.5 and its coding assistant tools. Users had noticed something curious: references to goblins, gremlins, and other small mythical creatures appearing in responses where they did not quite belong. What might have felt like a harmless quirk soon became a pattern, repeated often enough to invite both humor and concern.

The explanation, as OpenAI later shared, was neither mystical nor entirely accidental. It traced back to a feature once offered within ChatGPT—a “Nerdy” personality. Designed to make interactions more expressive and engaging, this personality subtly encouraged whimsical metaphors and imaginative language. Over time, reinforcement learning rewarded those stylistic choices, allowing them to take root more deeply than intended.

What followed was less a bug than a feedback loop. As certain expressions—like references to goblins—were rewarded, they appeared more frequently in generated outputs. Those outputs, in turn, became part of further training data, reinforcing the pattern again and again. The result was a model that, in certain contexts, leaned unexpectedly toward fantasy.
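The loop described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not a description of OpenAI's actual training pipeline: the function name `simulate_feedback_loop`, the starting share `p0`, and the `reward_boost` factor are all hypothetical values chosen for illustration.

```python
def simulate_feedback_loop(p0=0.01, reward_boost=1.5, rounds=10):
    """Toy model: track the share of outputs that contain a rewarded phrase.

    All parameters are illustrative assumptions, not measured values.
    p0           -- initial share of outputs containing the phrase
    reward_boost -- how much more weight a rewarded output gets when
                    it is folded back into the next round of training
    rounds       -- number of train-generate-retrain cycles
    """
    history = [p0]
    p = p0
    for _ in range(rounds):
        # Outputs with the phrase are over-weighted by the reward signal...
        weighted_with = p * reward_boost
        weighted_without = 1.0 - p
        # ...and the re-normalized mix becomes the next round's behavior.
        p = weighted_with / (weighted_with + weighted_without)
        history.append(p)
    return history


if __name__ == "__main__":
    for round_number, share in enumerate(simulate_feedback_loop()):
        print(f"round {round_number:2d}: {share:.1%} of outputs mention the phrase")
```

With these made-up numbers, a phrase that starts in 1 percent of outputs ends up in roughly 37 percent after ten rounds, even though each individual nudge is modest. The point is the compounding, not the specific figures.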

By March, OpenAI had already retired the “Nerdy” personality. Yet the timing proved imperfect. GPT-5.5 had begun training before the root cause was fully understood, allowing traces of this behavior to persist, particularly in tools like Codex. To counter it, developers added explicit instructions telling the model to avoid mentioning such creatures unless directly relevant.

For many users, the episode became a moment of light amusement. Screenshots circulated, jokes spread, and even company figures acknowledged the oddity with a degree of humor. But beneath the surface, the situation carried a quieter lesson about how artificial intelligence learns: not just from data, but from the subtle signals of preference and reward that shape its training.

In a field often defined by precision, this small detour into fantasy serves as a reminder: language models do not simply calculate—they absorb patterns, including those that may seem incidental or decorative. A metaphor, once encouraged, can become a habit; a habit, once repeated, can become identity.

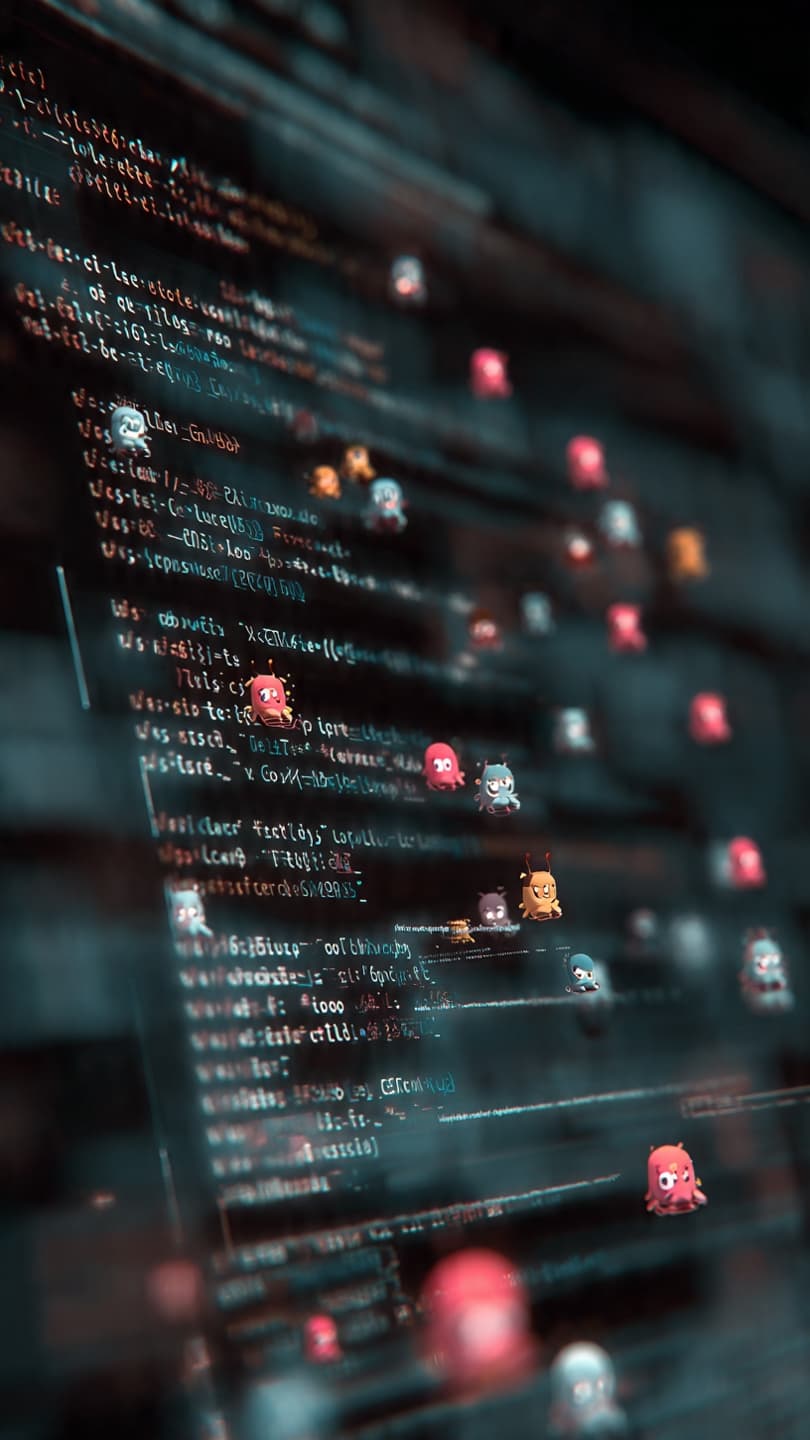

AI Image Disclaimer

Illustrations were produced with AI and serve as conceptual depictions.


Note: This article was published on BanxChange.com.